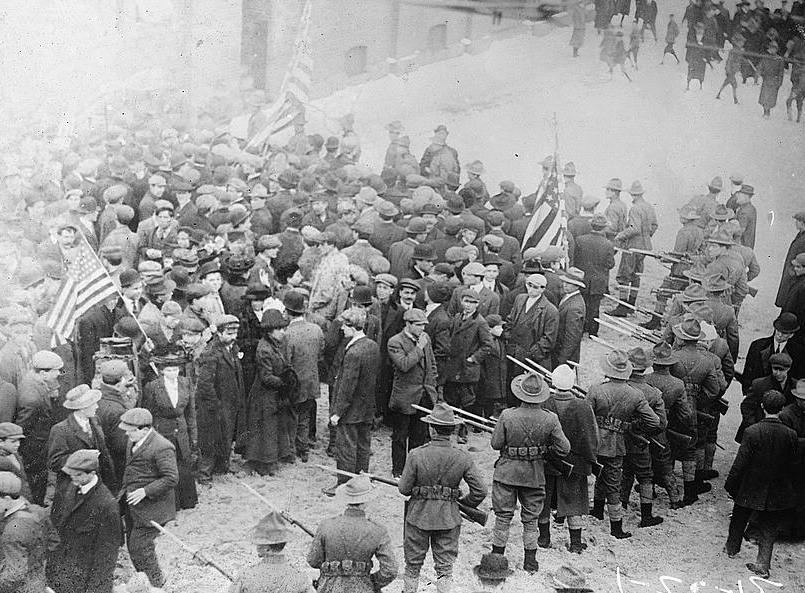

Here is a succinct and insightful comment, from Hacker News user AlisdairO, on the trend toward technology handling every kind of labor that can possibly be delegated to it:

> The sad reality is that there’s a nontrivial chunk of the populace that isn’t able to pick up highly skilled roles. It also ignores the role of unskilled jobs in providing space for people whose job class has been destroyed and need to retrain (or mark time until retirement).
>
> I’m not advocating slowing innovation to prevent job loss. I am advocating avoiding magic thinking (‘there’s always new jobs to go to’): we need to start a serious conversation about what we do with our society when we have the levels of unemployment we can expect in an AI-shifted world. Right now we’re trending much more towards dystopia than utopia.

I’m going to get around to the dystopian futurism part, but first, a long digression about intelligence! It’s a divisive topic but an important one.

Sometimes I get flak for saying this, but here goes: The average person is not very smart. Your intellect and my intellect probably exceed the average, simply by virtue of being interested in abstract ideas. We’re able to understand those ideas reasonably well. Most people aren’t. Remember what high school was like?

There’s that old George Carlin quip: “Think of how stupid the average person is, then realize that half of them are stupider than that.” This is not a very PC thing to talk about, especially because so many racists justify their hateful worldview with psychometrics. But it’s cruel to insist that everyone has the same level of ability, when that is clearly not true in any domain.

You and I may not be geniuses — I’m certainly not — but we have the capacity to be competent knowledge workers. Joe Schmo doesn’t. He may be able to do the kind of paper-pushing that is rapidly being automated, but he can’t think about things on a high level. He doesn’t read for fun. He can’t synthesize information and then analyze it.

That doesn’t mean that Joe Schmo is a bad person — if he were a bad person, we wouldn’t care so much that the economy is accelerating beyond his abilities. The cruel truth is that Joe Schmo is dumb. He just is. AFAIK there is no way to change this.

I hate that I have to make this disclaimer, and yet it’s necessary: I’m not in favor of eugenics. In theory selective breeding is a good idea, but I can’t think of a centrally planned way for it to be implemented among humans that wouldn’t be catastrophically unjust.

Also, while raw intellect may correlate with good decision-making, it doesn’t ensure it. Peter Thiel’s IQ is likely higher than mine, but I don’t want him to run the world. (Tough luck for me, I guess.) As Harvard professor and economist George Borjas told Slate:

> Economic outcomes and IQ are only weakly related, and IQ only measures one kind of ability. I’ve been lucky to have met many high-IQ people in academia who are total losers, and many smart, but not super-smart people, who are incredibly successful because of persistence, motivation, etc. So I just think that, on the whole, the focus on IQ is a bit misguided.

It’s also notable that people with similarly high IQs often disagree with each other.

And now back to the topic of technological unemployment!

The two main responses to concerns along the lines of “all the jobs will disappear” are:

- Universal basic income, yay!

- No they won’t, look what happened after the Industrial Revolution!

The counterargument to universal basic income is, as Josh Barro put it:

> UBI does nothing to replace the sense of reward or purpose that comes from a job. It gives you money, but it doesn’t give you the sense that you got the money because you did something useful. […] The robots have not taken our jobs yet. It is not time to surrender to a social change that is likely to further destabilize a world that is already troubled.

The counterargument to the Industrial Revolution parallel is that AI (alternatively called machine learning, or automation, if you prefer those terms) is different. Andrew Ng is the chief scientist at Baidu, and this is what he told the Wall Street Journal:

> Things may change in the future, but one rule of thumb today is that almost anything that a typical person can do with less than one second of mental thought we can either now or in the very near future automate with AI.
>
> This is a far cry from all work. But there are a lot of jobs that can be accomplished by stringing together many one-second tasks.

And then there are concerns about general AI, which I don’t want to get into here.

If you’re curious about my opinion, it’s this: We’re in for a difficult couple of decades. Most hard problems can’t be solved quickly.

Tachikoma artwork by Abisaid Fernandez de Lara.